- Executive Summary

- The Challenge: Observability Tech Debt at Enterprise Scale

- Diagnosis: Understanding the Root Causes

- Transformation Framework: The Three-Pillar Approach

- Architecture Deep Dive: Before and After

- Implementation Methodology

- Business Impact and Results

- Governance and Self-Service Model

- Lessons Learned and Recommendations

- Industry Applicability and Future Outlook

Executive Summary

At a leading automotive remarketing B2B service provider, a Splunk Enterprise deployment processing 3-5 TB of log data daily across 20 business units and 100+ application teams had accumulated critical technical debt. The seven-figure annual run-rate was climbing, query performance was degrading, and engineering teams were building shadow logging solutions to work around a platform they no longer trusted.

Over 12-18 months, a lean team of 4 engineers re-architected the entire pipeline, introducing Cribl Stream as an intelligent routing layer with tiered Amazon S3 storage, all managed through Terraform IaC on AWS. Results: 80%+ TCO reduction, ~91% query performance improvement (14m 47s down to 1m 22s), 40% MTTR reduction for P1 incidents, and daily ingest reduced from 3-5 TB to ~700 GB, all without loss of operational visibility.

1. The Challenge: Observability Tech Debt at Enterprise Scale

The organization's Splunk deployment had grown organically across 20 business units and 100+ application teams with no framework to manage data economics at scale. The result was observability tech debt:

| Dimension | State at Project Initiation |
|---|---|
| Daily Log Ingest Volume | 3-5 TB/day |
| Business Units Supported | 20 |
| Application Teams | 100+ |
| Annual Platform Run-Rate | Seven-figure ($1M-$2M+) |
| Compliance Framework | Enterprise Audit / Regulatory Compliance |
| Industry | Automotive / Fleet / Financial Services |
| Retention Policies | Mixed / Inconsistent across BUs |

Symptoms of Critical Tech Debt

Performance Degradation: Search queries routinely exceeded 5-minute timeout thresholds. Service owners waited 10-15 minutes for diagnostic queries that should complete in seconds. Critical dashboards timed out during peak hours.

Engineering Trust Erosion: Teams lost confidence and built shadow logging solutions: ad-hoc ELK stacks, local file exports, alternative telemetry routing. This created blind spots in incident response and compliance coverage.

Economic Unsustainability: The seven-figure annual run-rate, compounded by heavy professional services fees, led leadership to question whether the platform should be replaced entirely.

When a service owner told our team that a critical 15-minute diagnostic query was "just how Splunk works," we knew the problem had moved beyond technology. The organization had normalized failure.

2. Diagnosis: Understanding the Root Causes

A comprehensive audit revealed the tech debt was a convergence of data, architectural, and cultural failures:

Data Quality Crisis: Approximately 30-40% of ingested data provided zero operational value: debug logs from decommissioned apps whose forwarders were never removed, duplicated event streams, chatty health checks with no alerting dependencies, and middleware left at DEBUG level in production.

Architectural Entropy: No consistent pipeline design across 20 BUs. Log data entered through a patchwork of Universal Forwarders, Heavy Forwarders, and HEC endpoints with no centralized routing, filtering, or enrichment layer.

Governance Vacuum: Indexes retained data 13+ months without compliance justification. No data classification meant debug logs consumed the same costly tier as regulatory audit trails. No ownership model, no onboarding process, no decommission mechanism.

3. Transformation Framework: The Three-Pillar Approach

Three interdependent pillars, deliberately sequenced: data intelligence first, architecture second, culture third. You cannot optimize what you do not understand, and you cannot sustain what your teams do not embrace.

Pillar 1: Data Intelligence & Governance

Every data source was classified into tiers based on operational criticality, compliance requirements, and consumption patterns:

| Tier | Classification | Routing Destination | Retention |
|---|---|---|---|
| Tier 1 | Mission-Critical / Regulatory Audit | Hot Storage (Splunk Indexes) | Per compliance mandate |
| Tier 2 | Operational / Active Monitoring | Hot Storage (Splunk Indexes) | 30-90 days |
| Tier 3 | Historical / Low-Frequency | S3 Tiered Storage (by retrieval frequency) | 13 months |
| Tier 4 | Zero-Value / Decommissioned | Filtered at Source (Dropped) | N/A |
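The tiering rules can be expressed as a small, ordered decision function. The following Python is a minimal sketch, not the classifier used in this project. The `DataSource` fields (compliance flag, alert dependencies, 90-day query count, owner status) and the query threshold are hypothetical signals of the kind a Phase 1 audit might collect.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    MISSION_CRITICAL = 1  # regulatory audit trails; hot, retention per mandate
    OPERATIONAL = 2       # active monitoring; hot, 30-90 days
    HISTORICAL = 3        # low-frequency access; S3, 13 months
    ZERO_VALUE = 4        # dropped before indexing


@dataclass(frozen=True)
class DataSource:
    # Hypothetical audit attributes; not fields from any real tool.
    name: str
    compliance_required: bool
    has_alert_dependencies: bool
    queries_last_90_days: int
    owner_active: bool  # False when the owning application is decommissioned


def classify(src: DataSource, active_query_threshold: int = 10) -> Tier:
    """Assign a tier, checking rules in priority order (threshold is assumed)."""
    if src.compliance_required:
        return Tier.MISSION_CRITICAL  # compliance overrides every other signal
    if not src.owner_active:
        return Tier.ZERO_VALUE  # e.g. forwarders left behind by retired apps
    if src.has_alert_dependencies or src.queries_last_90_days >= active_query_threshold:
        return Tier.OPERATIONAL
    if src.queries_last_90_days > 0:
        return Tier.HISTORICAL
    return Tier.ZERO_VALUE  # nothing alerts on it and nobody queries it
```

The compliance rule is checked first so that a regulatory audit trail stays in Tier 1 even when nobody queries it.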

Pillar 2: Intelligent Routing Architecture

Cribl Stream was inserted between log sources and Splunk as a centralized control plane. Data could be inspected, classified, and routed based on content, source, and tier, all before incurring indexing costs, with no changes required to source applications.
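Conceptually, a routing layer evaluates an ordered list of filters and sends each event to the first matching destination. The Python below illustrates that idea and is not Cribl Stream configuration. It assumes a `tier` field has already been attached to each event, and the route and destination names are hypothetical. Unmatched events fall through to a quarantine destination instead of being dropped, in line with the governance model described later.

```python
from collections.abc import Callable

Event = dict  # a parsed event; assumes a "tier" key was set upstream

# Hypothetical ordered route table: (route name, filter, destination).
# Evaluation is first-match-wins; destination names are placeholders.
ROUTES: list[tuple[str, Callable[[Event], bool], str]] = [
    ("drop_zero_value", lambda e: e.get("tier") == 4, "devnull"),
    ("hot_splunk", lambda e: e.get("tier") in (1, 2), "splunk_indexers"),
    ("archive_s3", lambda e: e.get("tier") == 3, "s3_archive"),
]
QUARANTINE = "s3_quarantine"  # unclassified events are held, not dropped


def route(event: Event) -> str:
    """Return the destination for an event before any indexing cost is paid."""
    for _name, matches, destination in ROUTES:
        if matches(event):
            return destination
    return QUARANTINE
```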

Pillar 3: Cultural & Operational Evolution

Transitioning from "log everything, sort later" to disciplined data management required: self-service capabilities within guardrails, automated classification and retention policies, cost/performance feedback loops for each team, and a data governance committee with BU representatives.

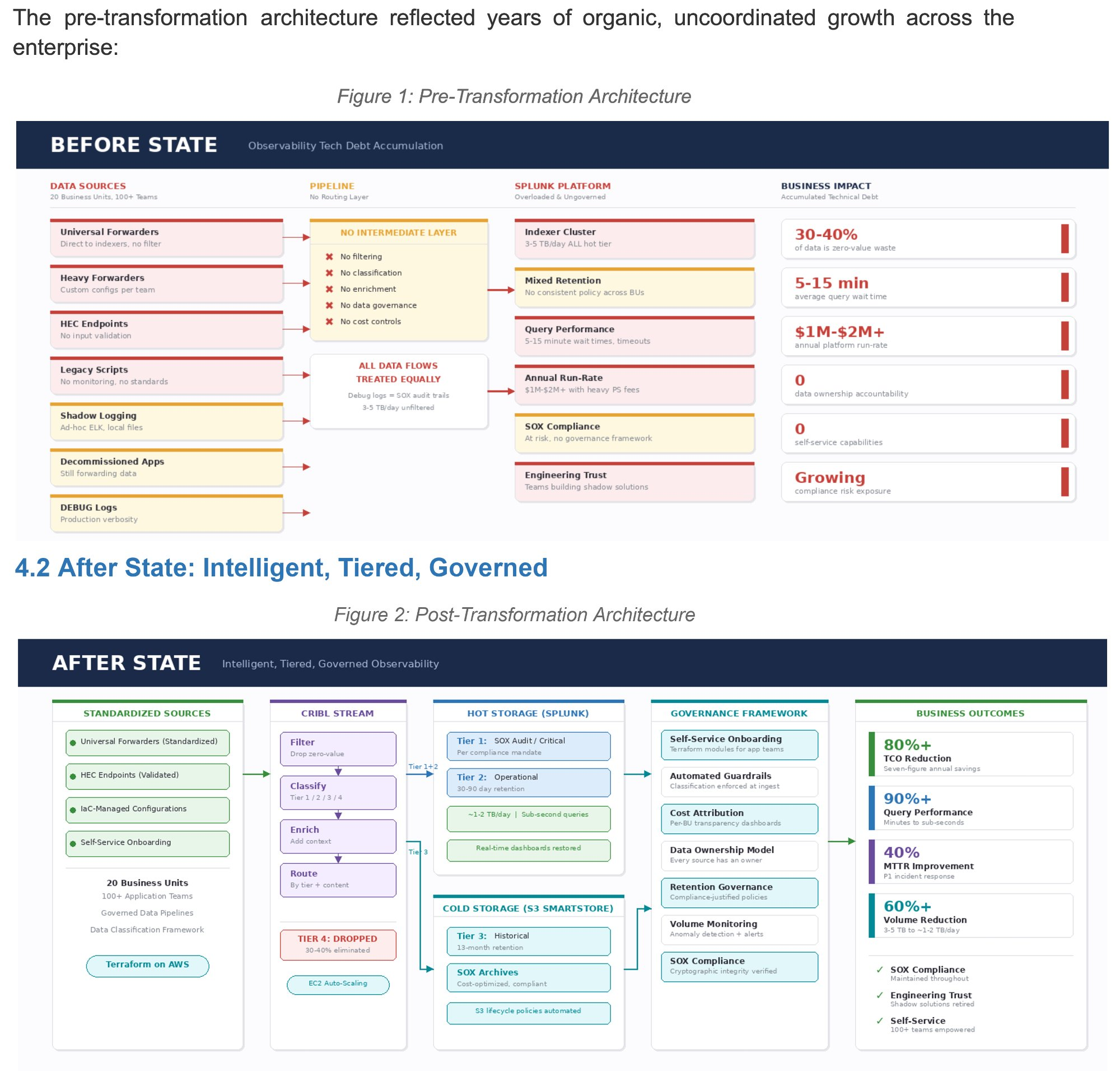

4. Architecture Deep Dive: Before and After

Before State: Uncontrolled Organic Growth

No intermediate processing layer sat between sources and indexers, so debug logs consumed the same expensive resources as compliance-critical audit trails. As volumes grew, query performance degraded; slow queries eroded engineering trust and drove shadow solutions, which further fragmented the landscape.

After State: Intelligent, Tiered, Governed

- Tier 4 (zero-value) dropped before reaching Splunk, eliminating 30-40% of volume immediately

- Tier 1 & 2 flow to Splunk indexes for real-time querying with optimized retention

- Tier 3 routed to Amazon S3 tiered storage by retrieval frequency

Infrastructure logs follow CISO-defined retention policies; application logs adhere to BU-specific policies. S3 lifecycle policies automate tier transitions and purging.
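The S3 side of this tiering can be captured as a lifecycle configuration. The sketch below builds one in Python as a plain dictionary. The 30- and 90-day transition ages, the STANDARD_IA and GLACIER_IR storage classes, and the 395-day (roughly 13-month) expiration are illustrative assumptions, not this deployment's actual policy values.

```python
def tier3_lifecycle_configuration(prefix: str, retention_days: int = 395) -> dict:
    """Build an S3 lifecycle configuration for Tier 3 objects under `prefix`.

    Assumed values: transition ages, storage classes, and a 395-day
    expiration approximating the 13-month Tier 3 retention.
    """
    if retention_days <= 90:
        raise ValueError("retention must outlast the last transition (90 days)")
    return {
        "Rules": [
            {
                "ID": f"tier3-{prefix.strip('/')}",
                "Filter": {"Prefix": prefix},
                "Status": "Enabled",
                "Transitions": [
                    # Queried less often after the first month.
                    {"Days": 30, "StorageClass": "STANDARD_IA"},
                    # Rarely queried, but still retrievable in milliseconds.
                    {"Days": 90, "StorageClass": "GLACIER_IR"},
                ],
                # Purge automatically once the retention period lapses.
                "Expiration": {"Days": retention_days},
            }
        ]
    }
```

The dictionary has the shape that boto3's `put_bucket_lifecycle_configuration` accepts for its `LifecycleConfiguration` argument. In a Terraform-managed setup like the one described here, the same rules would more naturally live in an `aws_s3_bucket_lifecycle_configuration` resource.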

Infrastructure as Code: Terraform on AWS

| Component | Terraform-Managed Configuration |
|---|---|
| Cribl Stream Workers | EC2 fleet with auto-scaling groups via Terraform modules |
| Splunk Indexer Cluster | Right-sized cluster with S3-tiered storage |
| S3 Storage Tiers | Lifecycle policies, encryption, access controls, retention automation per CISO/BU policy |
| Network & Security | VPC, security groups, IAM roles, cross-account access |
| Monitoring & Alerting | CloudWatch for infrastructure health and capacity planning |

Teams onboard new log streams through standardized Terraform modules, reducing provisioning from weeks to hours while ensuring governance compliance.

5. Implementation Methodology

Four phases over 12-18 months, executed by a lean team of 4 engineers:

Phase 1: Discovery & Assessment (Months 1-3) - Full audit of all data sources, pipelines, and consumption patterns across 20 BUs. Mapped every forwarder and HEC endpoint, cataloged every index and retention config, interviewed service owners, and documented compliance requirements with internal audit and legal.

Phase 2: Architecture & Proof of Concept (Months 3-6) - Cribl Stream pilot with two high-volume BUs. Validated the classification framework, demonstrated production filtering/routing, established performance baselines, and confirmed audit trail integrity through the routing layer.

Phase 3: Phased Rollout (Months 6-14) - Wave-based production rollout prioritized by volume and criticality. Each wave: classify sources, implement Cribl routes, validate compliance integrity, cutover with rollback capability, decommission legacy configs.
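One way to make the "validate compliance integrity" step concrete is to compare an order-independent fingerprint of Tier 1 audit events captured at the source against what arrives at the destination. This is a hypothetical Python sketch with illustrative function names. It assumes raw events can be collected from both ends for the same time window.

```python
import hashlib
from collections.abc import Iterable


def trail_fingerprint(events: Iterable[str]) -> tuple[int, str]:
    """Order-independent (count, digest) over raw event strings.

    Sorting per-event hashes ignores arrival order, while duplicated,
    missing, or altered events still change the result.
    """
    digests = sorted(hashlib.sha256(e.encode("utf-8")).hexdigest() for e in events)
    combined = hashlib.sha256("".join(digests).encode("ascii")).hexdigest()
    return len(digests), combined


def verify_audit_integrity(source_events: Iterable[str],
                           destination_events: Iterable[str]) -> bool:
    """True only if every source event arrived exactly once, unaltered."""
    return trail_fingerprint(source_events) == trail_fingerprint(destination_events)
```

Because PII masking happens at the routing layer, a byte-level comparison like this only holds for events that pass through unmodified. For masked streams, comparing a stable event ID or fingerprinting before masking would be the more realistic choice.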

Phase 4: Optimization & Self-Service (Months 14-18) - Self-service Terraform modules, automated classification guardrails, BU-level data economics dashboards, and governance committee structure.

Challenges

Organizational Resistance: Teams raised a pointed concern: "Are we just walking from one tech debt to another with a different name?" Rather than dismissing it, the team addressed it with transparent metrics and before-and-after performance comparisons at each rollout wave.

Migration Risk: Every wave included full rollback capability. A tiered support model, separate from the data-classification tiers, handled escalation: self-service docs for support Tier 1, core engineering for support Tier 2, and the project lead and governance committee for support Tier 3 (compliance and architectural decisions).

6. Business Impact and Results

Financial: 80%+ TCO reduction through eliminating zero-value data at the Cribl routing layer, right-sizing the indexer cluster, moving long-term retention to S3, and reducing professional services dependency via self-service IaC.

Query Performance: ~91% improvement. A critical diagnostic query went from 14 minutes 47 seconds to 1 minute 22 seconds. Driven by reduced index sizes, optimized data models, S3 offloading of historical data, and elimination of resource contention from zero-value processing.
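The headline figures reduce to simple percentage arithmetic. This Python snippet reproduces them from the numbers quoted in this case study. The volume calculation assumes 1 TB = 1,000 GB.

```python
def to_seconds(minutes: int, seconds: int) -> int:
    return minutes * 60 + seconds


def percent_reduction(before: float, after: float) -> float:
    """Percentage decrease from `before` to `after`."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before * 100


before = to_seconds(14, 47)  # 887 s
after = to_seconds(1, 22)    # 82 s
print(f"query time: -{percent_reduction(before, after):.1f}%")  # -90.8%
print(f"speedup: {before / after:.1f}x")                          # 10.8x

# Ingest: 3-5 TB/day down to ~700 GB/day, assuming 1 TB = 1,000 GB.
for start_gb in (3000, 5000):
    print(f"{start_gb} GB -> 700 GB: -{percent_reduction(start_gb, 700):.1f}%")
# -76.7% and -86.0%
```

The "~91%" figure is therefore a 90.8% reduction in wall-clock time, roughly a 10.8x speedup. The ingest reduction spans about 77-86%, depending on the starting daily volume.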

Data Volume: 3-5 TB/day reduced to ~700 GB through a disciplined principle: cut down noise, retain signal, establish actionable alerts. An ongoing monitoring gate with automated anomaly detection and quarterly reviews ensures sustained data hygiene.
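A monitoring gate like this could start as a simple statistical check on daily ingest. The sketch below flags a day whose volume sits more than three standard deviations from a trailing window. The z-score method, window, and threshold are assumptions chosen for illustration, not the anomaly detection the team deployed.

```python
from statistics import mean, stdev


def is_volume_anomaly(history_gb: list[float], today_gb: float,
                      z_threshold: float = 3.0) -> bool:
    """Flag today's ingest if it is more than `z_threshold` standard
    deviations from the trailing window (window and threshold are assumed)."""
    if len(history_gb) < 2:
        return False  # too little history to estimate spread
    mu = mean(history_gb)
    sigma = stdev(history_gb)
    if sigma == 0:
        return today_gb != mu  # perfectly flat history: any change stands out
    return abs(today_gb - mu) / sigma > z_threshold
```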

Incident Response: 40% P1 MTTR reduction from faster queries, higher signal quality, restored platform trust (teams abandoned shadow solutions), and improved alert fidelity.

The true metric of success was not the spreadsheet. It was the relief on a service owner's face when a critical diagnostic query that used to take nearly 15 minutes finished in under 90 seconds. That is when technical leadership compounds into organizational trust.

7. Governance and Self-Service Model

Designed to be sustainable without the original project team:

| Capability | Mechanism | Governance Guardrail |
|---|---|---|
| New Log Source Onboarding | Terraform Module Request | Auto-classified per tiering framework |
| Retention Policy Changes | Governed Configuration | Must cite compliance justification |
| Data Volume Monitoring | Self-Service Dashboard | Alerts at 80% of allocated budget |
| Pipeline Modifications | Cribl Stream Routes | Peer-reviewed, IaC version-controlled |
| Index Creation | Standardized Request Process | Naming conventions, lifecycle policies enforced |
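The guardrails in this table lend themselves to automated checks that run before a Terraform request is applied. Below is a hypothetical Python validator for index creation requests. The `<bu>_<app>_<env>` naming pattern and the request fields are assumptions. The rule that only Tier 1 and Tier 2 sources receive Splunk indexes is inferred from the tiering table.

```python
import re
from dataclasses import dataclass

# Hypothetical naming convention: <bu>_<app>_<env>, lowercase alphanumerics.
INDEX_NAME = re.compile(r"[a-z0-9]+_[a-z0-9]+_(prod|stage|dev)")


@dataclass
class IndexRequest:
    # Hypothetical request fields submitted through a Terraform module.
    index_name: str
    tier: int
    retention_days: int
    compliance_justification: str = ""


def validate(req: IndexRequest) -> list[str]:
    """Return guardrail violations; an empty list means the request passes."""
    errors = []
    if not INDEX_NAME.fullmatch(req.index_name):
        errors.append(f"index name {req.index_name!r} violates naming convention")
    if req.tier not in (1, 2):
        # Tier 3 routes to S3 and Tier 4 is dropped, so neither needs an index.
        errors.append("only Tier 1 and Tier 2 sources are indexed in Splunk")
    elif req.tier == 1 and not req.compliance_justification.strip():
        errors.append("Tier 1 retention must cite a compliance justification")
    elif req.tier == 2 and not 30 <= req.retention_days <= 90:
        errors.append("Tier 2 retention must be 30-90 days")
    return errors
```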

Governance enforced through automation: unclassified data quarantined (not dropped) at the Cribl layer, Terraform-managed retention policies, volume anomaly alerting, and per-BU cost attribution dashboards.
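Per-BU cost attribution and the 80% budget alert can be sketched in a few lines. This Python example assumes costs are split in proportion to ingested volume and that each BU has a volume budget in GB; both are simplifying assumptions. Sources without a recorded owner are collected under a hypothetical `unowned` bucket so that ownership gaps stay visible.

```python
from collections import defaultdict


def attribute_costs(ingest_gb_by_source: dict[str, float],
                    source_owner: dict[str, str],
                    platform_cost: float) -> dict[str, float]:
    """Split platform cost across BUs in proportion to ingested volume."""
    gb_by_bu = defaultdict(float)
    for source, gb in ingest_gb_by_source.items():
        # Sources with no recorded owner land in a visible "unowned" bucket.
        gb_by_bu[source_owner.get(source, "unowned")] += gb
    total = sum(gb_by_bu.values())
    if total == 0:
        return {}
    return {bu: platform_cost * gb / total for bu, gb in gb_by_bu.items()}


def budget_alerts(usage_gb: dict[str, float], budget_gb: dict[str, float],
                  threshold: float = 0.8) -> list[str]:
    """BUs whose usage has reached `threshold` of their allocated budget."""
    return sorted(bu for bu, used in usage_gb.items()
                  if bu in budget_gb and used >= threshold * budget_gb[bu])
```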

Compliance and Security

- PII masking at the Cribl routing layer before data reaches any storage tier

- Documented chain-of-custody for all classification and retention decisions

- Automated compliance reporting across all tiers

- Quarterly audit reviews with internal audit and legal

- Per-team security certification of PII masking standards before production deployment
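PII masking at the routing layer amounts to rewriting matched substrings before an event is forwarded. The regular expressions below (a US SSN format and a simple email pattern) are illustrative assumptions only. Real masking rules would follow the organization's certified PII standards and would need far broader coverage.

```python
import re

# Illustrative patterns only; production rules would come from the
# organization's own PII standards and per-team certification.
PII_PATTERNS = [
    (re.compile(r"\b\d{3}-\d{2}-\d{4}\b"), "***-**-****"),  # US SSN format
    (re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"), "<email>"),
]


def mask_pii(raw: str) -> str:
    """Replace PII matches before the event is written to any storage tier."""
    for pattern, replacement in PII_PATTERNS:
        raw = pattern.sub(replacement, raw)
    return raw
```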

8. Lessons Learned and Recommendations

Start with Data Intelligence, Not Architecture. Understanding your data must precede tooling decisions. The Phase 1 audit revealing 30-40% zero-value ingest shaped every subsequent architectural choice.

Lean Teams with Clear Ownership. Four engineers transformed infrastructure serving 20 BUs and 100+ teams. A small team with direct access to decision-makers and no coordination tax moved faster than any program office could have. The constraint was a strength: every engineer owned end-to-end delivery for their rollout waves.

Culture Change Requires Self-Service, Not Mandates. "Log less" mandates breed resentment. Transparent cost attribution and self-service tools create natural incentives for data hygiene.

Resistance is Signal, Not Obstacle. Pushback about "trading one tech debt for another" forced stronger governance, clearer metrics, and more transparent processes. The architecture was better because of the scrutiny.

Tactical Recommendations

Splunk users: Start with the Splunk Monitoring Console to establish ingest and search baselines. Identify the top 10 sourcetypes by volume and assess their value. Evaluate S3 tiered storage for data retained longer than 30 days. A data routing layer is the single highest-ROI investment.
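The "top 10 sourcetypes" step is a group-by-and-rank over ingest volume. The Python sketch below assumes usage records have already been exported as (sourcetype, bytes) pairs, for example from Splunk's license usage logs. The export step and record format are assumptions.

```python
from collections import Counter
from collections.abc import Iterable


def top_sourcetypes(usage: Iterable[tuple[str, int]],
                    n: int = 10) -> list[tuple[str, float]]:
    """Rank sourcetypes by total ingested bytes; volumes returned in GB (1e9 bytes)."""
    totals = Counter()
    for sourcetype, nbytes in usage:
        totals[sourcetype] += nbytes
    return [(st, b / 1e9) for st, b in totals.most_common(n)]
```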

Any platform: The three-pillar framework (Data Intelligence, Intelligent Routing, Cultural Evolution) is platform-agnostic. Democratized ingestion without governance always leads to unsustainable cost growth.

Warning Signs of Observability Tech Debt

- Retention > 13 months without documented compliance justification

- Query performance degrading quarter over quarter

- Platform costs rising 20%+ annually with flat capabilities

- Shadow logging solutions emerging across teams

- No data ownership model or cost attribution by BU

- Professional services fees exceeding 15% of platform costs
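This checklist can be turned into a repeatable assessment. The following Python sketch encodes each warning sign as a threshold check. The `PlatformSnapshot` fields are hypothetical, and reading "degrading quarter over quarter" as a strictly rising median query time is only one possible interpretation.

```python
from dataclasses import dataclass


@dataclass
class PlatformSnapshot:
    # Hypothetical inputs an assessment would gather.
    max_unjustified_retention_months: int
    median_query_seconds_by_quarter: list[float]  # oldest quarter first
    annual_cost_growth: float  # 0.25 means +25% year over year
    capabilities_flat: bool
    shadow_logging_teams: int
    has_bu_cost_attribution: bool
    professional_services_cost: float
    platform_cost: float


def warning_signs(s: PlatformSnapshot) -> list[str]:
    """Return the warning signs present in a snapshot."""
    signs = []
    if s.max_unjustified_retention_months > 13:
        signs.append("retention > 13 months without compliance justification")
    q = s.median_query_seconds_by_quarter
    if len(q) >= 2 and all(later > earlier for earlier, later in zip(q, q[1:])):
        signs.append("query performance degrading quarter over quarter")
    if s.annual_cost_growth >= 0.20 and s.capabilities_flat:
        signs.append("platform costs rising 20%+ with flat capabilities")
    if s.shadow_logging_teams > 0:
        signs.append("shadow logging solutions emerging")
    if not s.has_bu_cost_attribution:
        signs.append("no data ownership model or BU cost attribution")
    if s.platform_cost > 0 and s.professional_services_cost / s.platform_cost > 0.15:
        signs.append("professional services fees > 15% of platform costs")
    return signs
```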

9. Industry Applicability and Future Outlook

While this case study originated in automotive remarketing, the patterns are broadly applicable. Financial services (PCI-DSS, audit retention), healthcare (HIPAA), and high-growth tech companies all face analogous data classification and cost challenges.

AI/ML-Driven Data Intelligence: ML models for automated classification, anomaly detection, and predictive cost optimization. The governance framework here provides the labeled dataset to train these models.

OpenTelemetry: Vendor-neutral standards reinforce the value of a routing layer. Decoupling collection from storage enables multi-destination strategies without re-instrumenting applications.

FinOps for Observability: Cost attribution, data economics, and BU accountability are becoming industry-standard. This self-service governance model is an early implementation of that approach.

Technology Stack

Infrastructure: AWS Cloud | Terraform IaC | EC2 Auto-Scaling | VPC + IAM | CloudWatch | S3 Lifecycle Policies

Data Pipeline: Cribl Stream (filter, classify, enrich, route)

Hot Storage: Splunk Enterprise (Tier 1 & 2)

Cold Storage: Amazon S3 SmartStore (Tier 3)

Governance: Self-service Terraform modules, automated guardrails, cost attribution dashboards